Many books and articles have been written about penetration testing, and the topic becomes more relevant with every new information security incident. Criminals break into the networks of organizations to steal money directly from accounts (banks, financial institutions), to mount denial-of-service attacks (critical infrastructure enterprises), to steal personal data, and to carry out other malicious actions. The information security systems of most organizations cannot fully protect against qualified attackers. There are several reasons for this. One is that protection systems are not effective enough and the tools they include are not configured strictly enough. Another is that attempts to cover part of the regulatory requirements with organizational measures instead of technical ones often reduce the overall effectiveness of the protection system. Finally, threat models cannot tell you precisely how your network will be breached. Penetration testing lets you see a breach of your network through the eyes of an attacker. After all, on the defense side you may simply not know about, or not notice, weaknesses that let an attacker get into your network easily.

For example, many “old” security experts underestimate the threats that come from social engineering and hybrid attacks. Of course, a penetration test is also unlikely to reveal every possible attack vector, because there can always be zero-day vulnerabilities known only to the attackers themselves. But it does make it possible to assess the overall level of security and to understand which protections are missing in practice and which settings still need tuning. As a result, the requirements for the protection system are supplemented with the pentest findings and possibly with additional requirements, such as fault tolerance.

I hope this long introduction has made the case that penetration testing is needed to build a truly effective defense system.

Now we can move on to the practical part. We will divide penetration testing into a technical part and social engineering. The technical part, in turn, is divided into an external and an internal pentest.

This division of the technical part follows from the types of intruders a threat may come from. By an internal intruder we mean someone who is physically within the controlled territory or has legitimate access to it. Everyone else is considered external. That covers both an attacker breaking into the network over the Internet and an attacker who is inside the network of a group of companies but has no legitimate access to the corporate network of this particular organization.

So, let’s start. First, let’s look at what information about the target organization and its resources can be collected on the Internet. We will assume that we know the name of the organization, its official website, and the contact details of some employees. Let’s start with the website. Using https://whois.ru/ or a similar service, you can find out who the domain is registered to and which DNS servers it uses (which, along the way, can hint at the cloud provider hosting the resource).
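The lookup that such web services perform can also be done directly. The sketch below is only an illustration under stated assumptions: `query_whois` speaks the plain-text WHOIS protocol on TCP port 43, the default server `whois.iana.org` is a choice made here (registries run their own servers), and `SAMPLE` is a made-up response so that the parsing step runs without network access. Field names such as `nserver` vary between registries.

```python
import socket


def query_whois(domain: str, server: str = "whois.iana.org", timeout: float = 10.0) -> str:
    """Send one WHOIS query: a single line of text to TCP port 43."""
    with socket.create_connection((server, 43), timeout=timeout) as conn:
        conn.sendall(domain.encode("ascii") + b"\r\n")
        chunks = []
        while True:
            data = conn.recv(4096)
            if not data:  # the server closes the connection when it is done
                break
            chunks.append(data)
    return b"".join(chunks).decode("utf-8", errors="replace")


def parse_whois(text: str) -> dict[str, list[str]]:
    """Collect 'key: value' lines; a key such as nserver may repeat."""
    fields: dict[str, list[str]] = {}
    for line in text.splitlines():
        if line.startswith(("%", "#")) or ":" not in line:
            continue  # skip comments and free text
        key, _, value = line.partition(":")
        fields.setdefault(key.strip().lower(), []).append(value.strip())
    return fields


# Made-up response (hypothetical names), used instead of a live query.
SAMPLE = """\
% illustrative data only
domain:        EXAMPLE.TEST
nserver:       ns1.example.test.
nserver:       ns2.example.test.
registrar:     EXAMPLE-REGISTRAR
"""

record = parse_whois(SAMPLE)
print(record["nserver"])
```

Against a real target you would call `parse_whois(query_whois("example.org"))`; the name server entries are usually the quickest hint at who hosts the domain.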

We have learned something about the official resources of the organization, but we still do not know where the website is hosted: with a provider or in the organization’s own data center. Many companies place their web resources with hosting providers so that they do not have to maintain them themselves. We have the IP address of the website, so let’s try to find out who owns the address pool it belongs to. We use the https://2ip.ru/whois service, specifying the name of the site or the previously obtained IP. The IP range field shows the entire subnet that contains our address, and the Provider name field shows to whom this address pool is registered. In the example in the screenshot, the corporate website is hosted by a cloud provider.
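Once the IP range is known, it is useful to see how large it is and whether a given address falls inside it. The following is a small sketch using the standard `ipaddress` module. The `start - end` input format and the addresses (taken from the documentation range 192.0.2.0/24) are assumptions made for illustration, not values from the screenshot.

```python
import ipaddress


def describe_range(ip_range: str, address: str) -> dict:
    """Summarize a 'start - end' range and check whether address is inside it."""
    start_text, end_text = (part.strip() for part in ip_range.split("-"))
    start = ipaddress.ip_address(start_text)
    end = ipaddress.ip_address(end_text)
    target = ipaddress.ip_address(address)
    # A range may need several CIDR blocks if it is not aligned to one prefix.
    networks = list(ipaddress.summarize_address_range(start, end))
    return {
        "cidr": [str(net) for net in networks],
        "size": int(end) - int(start) + 1,
        "contains_target": start <= target <= end,
    }


info = describe_range("192.0.2.0 - 192.0.2.63", "192.0.2.10")
print(info)
```

A pool of a few dozen addresses or more registered to the same owner is the case worth a closer look, as discussed next.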

What do we do with this information? If the website is hosted by a hosting provider, then most likely we will not be able to develop the attack further, because corporate web resources very often serve purely marketing purposes and simply display information about the company. In that case the web portal, as a rule, does not interact with the corporate network of the organization itself. There are, of course, exceptions, when information is exported from a database in the corporate network and then displayed on the site. So it is still necessary to look for vulnerabilities on the site, but you should not count on being able to get from the site into the corporate network.

If, as in our screenshot, the site is hosted by a cloud provider and a large pool of addresses is rented as well (at least a few dozen), there is a chance that this subnet contains not only the web server but also other corporate resources. And if the IP address of the web server belongs to a pool of addresses rented directly by the tested company, the chances of penetrating the network through the site are quite high.

Continuing with the external subnets leased by the organization, there are a few more ways to find out which IP addresses a company owns. To begin with, we use the nslookup utility to find the MX records for the domain. As I mentioned at the beginning of the article, we know several email addresses, for example [email protected]. We need to write something to this address and make sure we get a reply. Then, in any mail client, we view the reply in RFC 822 format, that is, the message source with all its headers.

Here you can see a lot of interesting things. First of all, we can find out the IP address of the sender’s server in the Sender field. Antivirus and antispam systems can also leave their marks here. In this example, Yandex’s antispam left its mark. This information can help us later when penetrating the network. Ideally, the IP address of the sender’s server should match the MX record we found using nslookup, but this is not always the case, so it is best to check.
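Reading the headers by eye works for one message; for several replies it can be scripted. The sketch below uses the standard `email` package and rests on two assumptions made here: that the relays recorded their addresses in `Received` headers, which is where mail servers conventionally put them, and that filtering products add `X-` headers. The message in `RAW` is invented for the example (hypothetical hosts, documentation IP range).

```python
import re
from email import message_from_string

# Loose IPv4 pattern; good enough for pulling candidates out of header text.
IPV4 = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")


def relay_addresses(raw_message: str) -> list[str]:
    """Return the IPv4 addresses mentioned in Received headers, in order."""
    msg = message_from_string(raw_message)
    found: list[str] = []
    for header in msg.get_all("Received", []):
        for ip in IPV4.findall(header):
            if ip not in found:
                found.append(ip)
    return found


def filter_traces(raw_message: str) -> dict[str, str]:
    """Return the X- headers, where antispam and antivirus tools often sign."""
    msg = message_from_string(raw_message)
    return {name: value for name, value in msg.items() if name.lower().startswith("x-")}


# Invented message source, not taken from a real mailbox.
RAW = (
    "Received: from mail.example.test (mail.example.test [203.0.113.25])\n"
    "\tby mx.recipient.test with ESMTP id abc123;\n"
    "\tMon, 01 Jan 2024 10:00:00 +0000\n"
    "X-Spam-Flag: NO\n"
    "From: employee@example.test\n"
    "Subject: Re: question\n"
    "\n"
    "Thank you for your message.\n"
)

print(relay_addresses(RAW))
print(filter_traces(RAW))
```

The addresses found this way can then be compared with the hosts named in the MX records.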

There is another way to find out a company’s IP address. These days it is hard to find a corporate email system that disables HTML when displaying a message. After all, everyone likes a corporate logo in the signature, a background, and formatting in the text. We will use this. With HTML you can place a tag in the message that loads a 1-pixel image in the background color of the message, for example a white pixel. When the message is opened, the mail client requests this file from the web server, and the external IP address the request came from is recorded in the server logs.

There are paid services that implement this functionality. But in principle you can log the requests yourself; all you need is a web server with an external IP address. Registering a DNS name is not necessary, because the file can be referenced by IP address.
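A message of this kind can be assembled with the standard `email.mime` classes. This is a minimal sketch, not a complete mailing tool: the function name, the addresses, and the pixel URL `http://198.51.100.7/p.png` are all hypothetical, and the message is only built, not sent.

```python
import html
from email.mime.multipart import MIMEMultipart
from email.mime.text import MIMEText


def build_message(sender: str, recipient: str, subject: str,
                  body_text: str, pixel_url: str) -> MIMEMultipart:
    """Build a plain-text plus HTML message whose HTML part loads a 1x1 image."""
    markup = (
        "<html><body>"
        f"<p>{html.escape(body_text)}</p>"
        # The image is requested from our server when the HTML part is rendered.
        f'<img src="{html.escape(pixel_url, quote=True)}" width="1" height="1" alt="">'
        "</body></html>"
    )
    msg = MIMEMultipart("alternative")
    msg["From"] = sender
    msg["To"] = recipient
    msg["Subject"] = subject
    msg.attach(MIMEText(body_text, "plain", "utf-8"))
    msg.attach(MIMEText(markup, "html", "utf-8"))
    return msg


message = build_message(
    "tester@example.test",
    "employee@example.test",
    "Question",
    "Hello, could you confirm your office hours?",
    "http://198.51.100.7/p.png",  # hypothetical server, referenced by IP address
)
print(message["Subject"])
```

Keep in mind that many mail clients block remote images by default or fetch them through a proxy, so an empty log does not prove that the message was not read.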

On the server we then look at the access log; in my case it is /var/log/apache2/access.log. It shows the address from which the file was requested and the time of the request. This gives us the IP address of the gateway the organization uses to access the Internet.
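Picking the relevant entries out of the log can be automated. The sketch below assumes the log uses the Apache common or combined format and that the image is served as `/p.png`; both are assumptions for illustration, and the two log lines are invented.

```python
import re
from datetime import datetime

# Start of an Apache common/combined log line: host, identity, user, time, request, status.
LINE = re.compile(
    r'^(?P<host>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3})'
)


def pixel_hits(lines, pixel_path):
    """Return (client address, timestamp) for every request to pixel_path."""
    hits = []
    for line in lines:
        match = LINE.match(line)
        if match and match.group("path").split("?")[0] == pixel_path:
            when = datetime.strptime(match.group("time"), "%d/%b/%Y:%H:%M:%S %z")
            hits.append((match.group("host"), when))
    return hits


# Invented sample entries (documentation IP ranges).
SAMPLE_LOG = [
    '198.51.100.99 - - [01/Jan/2024:09:59:01 +0000] "GET /index.html HTTP/1.1" 200 512 "-" "curl/8.0"',
    '203.0.113.25 - - [01/Jan/2024:10:05:12 +0000] "GET /p.png HTTP/1.1" 200 68 "-" "Mozilla/5.0"',
]

hits = pixel_hits(SAMPLE_LOG, "/p.png")
for host, when in hits:
    print(host, when.isoformat())
```

With a real log you would pass an open file object, for example `pixel_hits(open("/var/log/apache2/access.log"), "/p.png")`.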

Before moving on, let’s summarize. So far we have gathered information about the external resources used by the organization: not only the corporate website, but also leased address pools and access gateways. Based on this information, we can already form a general picture of how the organization’s services interact over the network.

It’s no secret that many web pages contain vulnerabilities, but not all of them can be used to develop an attack further. For example, changing the content of the site’s pages can damage the organization’s image, but nothing more. Therefore, we will not go through every vulnerability in the OWASP Top 10 and will cover only the main points of information gathering. First, let’s try to find vulnerabilities on the site itself. For this we will use the free Nikto scanner, which is included in Kali Linux. Do not forget to use a VPN to hide your actions against the resource. My test target is http://testphp.vulnweb.com/, a site developed by Acunetix for testing vulnerability scanners. In the resulting report, the items of interest are exposed files and information about the software versions in use.

The OTUS blog has a detailed article on working with Nikto: https://habr.com/ru/company/otus/blog/492546/.

In addition to searching for vulnerabilities, you can try to enumerate the directories on the web server. Very often, web server administrators leave directories (admin panels, old versions of the site, backups, etc.) readable even though nothing on the main site links to them.

Such directories can nevertheless be found with special utilities. I use dirb from Kali Linux. In this search we found the Admin directory. A search like this also gives a general idea of which engine the website runs on. For example, a large number of wp* directories indicates WordPress.
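The idea behind such utilities is a dictionary search: request each candidate name and keep the ones the server does not reject. The following is a simplified sketch of that idea and not a description of how dirb itself works. The function names, the short word list, and the target host are hypothetical, and the demonstration uses a fake `probe` so that it runs without touching a real server.

```python
import urllib.error
import urllib.request
from typing import Callable, Iterable


def http_status(url: str, timeout: float = 5.0) -> int:
    """Return the HTTP status code for url, or 0 if the request fails."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code  # 403, 404 and so on still tell us something
    except OSError:
        return 0  # DNS failure, refused connection, timeout


def find_directories(base_url: str, words: Iterable[str],
                     probe: Callable[[str], int] = http_status) -> list[tuple[str, int]]:
    """Try base_url/<word>/ for every word and keep the answers that are not 404."""
    found = []
    for word in words:
        url = f"{base_url.rstrip('/')}/{word.strip('/')}/"
        status = probe(url)
        if status not in (0, 404):
            found.append((url, status))
    return found


def fake_probe(url: str) -> int:
    """Stand-in for a real server: only /admin/ exists."""
    return 200 if url.endswith("/admin/") else 404


results = find_directories("http://target.example.test", ["admin", "backup", "old"], probe=fake_probe)
print(results)
```

Omitting the `probe` argument switches to real HTTP requests, which should only be sent to hosts you are authorized to test.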

By the way, sometimes you can find a folder such as Upload, which can also contain a lot of interesting information. Ordinary search engines should not be neglected either. For example, Yandex has a Saved copy option, which is useful when a page is no longer available but you want to know what was on it. And of course there is the good old Internet Archive at https://archive.org/.

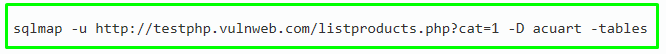

Another classic vulnerability from the OWASP Top 10 is SQL injection. The essence of this attack is to make the application execute your own query against the database and thereby gain access to restricted information. To search for such vulnerabilities, we will use the sqlmap utility. In the screenshot, sqlmap detected two MySQL databases: acuart and information_schema. As an example, let’s see which tables acuart contains.

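To do this, we run sqlmap again against the same URL, this time selecting the database with the -D acuart option and requesting its tables with --tables.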
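The mechanism that sqlmap automates can be shown in isolation. The sketch below is a self-contained illustration on an in-memory SQLite database, not the MySQL database of the test site. The table names, the data, and both lookup functions are hypothetical. It contrasts a query built by string concatenation with a parameterized one.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE artists (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE users (login TEXT, password TEXT);
INSERT INTO artists VALUES (1, 'first artist'), (2, 'second artist');
INSERT INTO users VALUES ('admin', 'secret');
""")


def lookup_vulnerable(artist_id: str):
    """Vulnerable: user input becomes part of the SQL text."""
    query = f"SELECT id, name FROM artists WHERE id = {artist_id}"
    return conn.execute(query).fetchall()


def lookup_safe(artist_id: str):
    """Safe: the input is passed as a bound parameter and stays data."""
    return conn.execute(
        "SELECT id, name FROM artists WHERE id = ?", (artist_id,)
    ).fetchall()


# The attacker supplies SQL instead of a number.
payload = "0 UNION SELECT login, password FROM users"

print(lookup_vulnerable("1"))      # normal use
print(lookup_vulnerable(payload))  # rows from the users table leak out
print(lookup_safe(payload))        # no rows: the payload is treated as a value
```

Tools such as sqlmap try many payloads of this kind automatically and infer the database structure from the responses.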

But the external pentest is not limited to the website. Let me remind you that the website may be hosted by a third party and have no direct connection to corporate resources, in which case even compromising the entire web server and gaining root will not help develop the attack into the internal network. The pentesters will, however, have something to write about in the external testing report.

We still have the previously discovered subnets leased by the organization. First of all, they can be scanned for open ports and to identify the software in use. The nmap and masscan utilities can do this. In my example I will use masscan. The subnet to scan and the port range must be given as input.

I scanned the web host’s subnet, so all of the hosts have port 80 open. Despite the apparent inefficiency of port scans against external subnets, they can produce some quite interesting results, such as RDP and SSH exposed to the Internet. With SSH, key or certificate authentication is often used, so guessing a password for these hosts is generally pointless. With RDP you can achieve much more: for example, you can try to guess a password, look for vulnerabilities, and so on.
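What a port scan checks can be sketched in a few lines. The example below is a plain TCP connect scan written for illustration; masscan and nmap use faster and more sophisticated techniques, so this is not a description of how they work. The function name and timeout are choices made here, and the demonstration scans only a listener that the script itself opens on the loopback interface.

```python
import socket


def scan_ports(host: str, ports, timeout: float = 0.5) -> list[int]:
    """Return the ports on host that accept a TCP connection."""
    open_ports = []
    for port in ports:
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            sock.settimeout(timeout)
            # connect_ex returns 0 on success instead of raising an exception.
            if sock.connect_ex((host, port)) == 0:
                open_ports.append(port)
    return open_ports


# Demonstration: open a local listener on a free port and scan it.
listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
listener.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
listener.listen(1)
demo_port = listener.getsockname()[1]

result = scan_ports("127.0.0.1", [demo_port])
listener.close()
print(result == [demo_port])
```

In practice the interesting ports to pass in would be ones like 22 (SSH) and 3389 (RDP), against address ranges that are within the agreed scope of the test.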

To close the topic of the external pentest, I will mention one more world-famous tool: the shodan.io resource. This site is essentially an online port scanner. By specifying an IP address, you can get information about the open ports and running services on that host.